WSJ October 19, 2019

How Steam and Chips Remade the World

Cheap energy powered an economic revolution in the 18th century, and cheap information in the 20th.

By John Steele Gordon

Not all inventions are equal. While the wheeled suitcase was a great idea, it didn’t change the world. But invent a technology that causes the price of a fundamental economic input to collapse, and civilization changes fundamentally and quickly. Someone born 250 years ago arrived in a world that, technologically, hadn’t changed much in centuries. But if he had lived a long life, he would have seen a new world.

For millennia there had been only four sources of energy, all expensive and limited: human muscle, animal muscle, moving water and wind. Thomas Newcomen built the first practical steam engine in 1712, but its prodigious fuel consumption severely limited its utility. Then in 1769 James Watt greatly improved it, making the engine four times as fuel-efficient. In 1781 he patented a rotary steam engine, which could turn a shaft and thus power machinery.

The steam engine could be scaled up almost without limit. The price of energy began a steep decline that continues to this day.

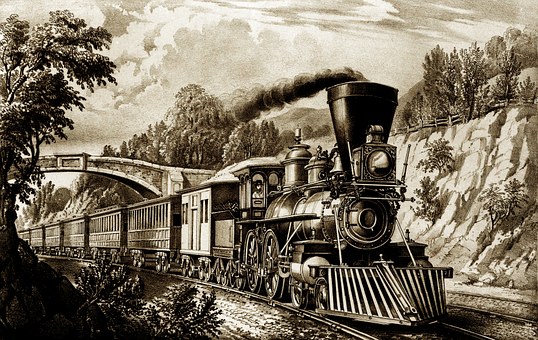

As factories came to be powered by steam, the price of goods fell, sending demand soaring. Once the steam engine was mounted on rails, overland transportation of people and goods became cheap and quick for the first time in history. Andrew Jackson needed nearly a month to get from Nashville, Tenn., to Washington by carriage for his inauguration in 1829. By 1860 the trip took two days.

Quick overland transportation made national markets possible. Businesses seized the opportunity and benefited from economies of scale, sending demand up even more as prices declined further. The collapsing cost of wire and pipes allowed such miracles as the telegraph, indoor plumbing and gas lighting to become commonplace. Enormous new fortunes came into being.

The age dominated by cheap energy lasted until the mid-20th century. Steam had been supplanted by electricity and the internal combustion engine, but someone from 1860 would have mostly recognized the technology of 1960, however dazzling its improvement. Then another world-changing technology emerged.

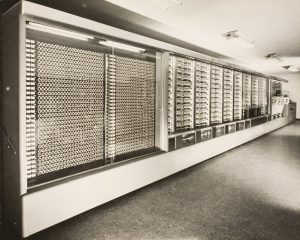

Before the 1940s the word “computer” referred to people, usually women, who calculated such things as the trajectories of artillery shells. The first programmable computer, Eniac, was powered up for work on Dec. 10, 1945. It was 2,352 cubic feet, the size of a large school bus, with 20,000 vacuum tubes that sucked up 150 kilowatts of power. But it was 1,000 times as fast as any electromechanical calculator and could calculate almost anything.

Photograph from the Mark I photo album (Lib.1964). Mark I (Automatic Sequence Controlled Calculator), complete view from the left, finished with Bel Geddes case.

Transistors soon replaced vacuum tubes, dramatically shrinking the size and power requirements of computers. But a big problem remained. The power of a computer depends not only on the number of transistors but also on the number of connections among them. Two transistors require only one connection, six require 15, and so on.
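The figures above follow if every transistor is wired directly to every other one: n transistors then have n(n-1)/2 possible pairwise connections. The short Python sketch below, a minimal illustration under that all-pairs assumption, shows how fast the count grows; the function name `pairwise_connections` is hypothetical, and no real machine was wired this way.

```python
def pairwise_connections(n: int) -> int:
    """Count distinct pairs among n transistors, n choose 2 = n(n-1)/2.

    Assumes (for illustration only) that every transistor connects
    directly to every other transistor.
    """
    if n < 0:
        raise ValueError("n must be non-negative")
    # Integer division is exact here because n(n-1) is always even.
    return n * (n - 1) // 2


for n in (2, 6, 10, 100, 1000):
    print(f"{n:>5} transistors -> {pairwise_connections(n):>7,} possible connections")
```

Under this assumption, doubling the number of transistors roughly quadruples the number of connections, which is why hand-wiring quickly became the bottleneck.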

While transistors could be manufactured, the connections had to be made by hand. Eniac had no fewer than five million hand-soldered connections. Until the “tyranny of numbers” could be overcome, computers would, like Newcomen’s steam engine, remain very expensive and thus of limited utility.

Had every computer on earth suddenly stopped working in 1969, the average man would not have noticed anything amiss. Today civilization would collapse in seconds. Nothing more complex than a pencil would work, perhaps not even your toothbrush.

What happened? In 1969 development began on the microprocessor, a computer on a silicon chip. It overcame the tyranny of numbers by creating the transistors and the connections at the same time. Soon the price of storing, retrieving and manipulating information began a precipitous decline, as the price of energy had two centuries earlier. Computing power that cost $1,000 in the 1950s costs a fraction of a cent today.

The first commercial microprocessor—the Intel 4004, introduced in 1971—had 2,250 transistors. Today some microprocessors have a million times as many, making them a million times as powerful but only marginally more expensive.

Microprocessors began to appear everywhere. Today’s cars have dozens of them, controlling everything from timing fuel injection to warning when you stray out of your lane. Even money is now mostly a plastic card with an embedded microprocessor. As the railroad was for the steam engine, the internet is the microprocessor’s most significant subsidiary invention. It revolutionized retailing, news distribution, entertainment, communication and much more.

Like cheap energy, cheap information has created enormous new fortunes, ineluctably increasing wealth inequality. But also like cheap energy, the source of those fortunes has given nearly everybody a far higher standard of living.

To understand how profound the microprocessor revolution has been, consider this. The great science writer Arthur C. Clarke once noted that any sufficiently advanced technology is indistinguishable from magic. A man from half a century ago would surely regard the now-ubiquitous smartphone as magic.

Mr. Gordon is author of “An Empire of Wealth: The Epic History of American Economic Power.”